If you have any skin in the high-performance computing game, you’ve almost certainly heard of the likes of MMX and SSE, the original “extensions” to the x86 instruction set: performance-optimized instructions that let developers use dedicated hardware to quickly perform tasks that previously required extra steps in software. If you haven’t, here’s a quick primer.

The “basic” instructions supported by PCs are known as the “x86 assembly language,” which is the lowest level of code available for writing software that runs on a “regular PC.” It was originally developed by Intel and later adopted by other players in the CPU game (including AMD and the now-defunct Via CPUs). All PCs, from the original Intel 8086 way back in 1978 to modern multi-core behemoths, support this language, and code written for the original x86 can (in theory) run on any machine built since.

While the core x86 instruction language has remained mostly the same since then, manufacturers have continuously released “extensions” to the language that provide additional features or allow for faster methods of computing certain results. Perhaps the most important of these was AMD’s x86_64 extension, which brought 64-bit support to an instruction set that dates back to the 16-bit era. But there are dozens more extensions out there, originally from several manufacturers, that have been adopted by the major vendors and are supported by the modern compilers and software that make your PC that much faster. The first of these was MMX, the “multimedia extensions” released back in 1997, which many may recognize from the “Intel Inside” adverts of yesteryear:

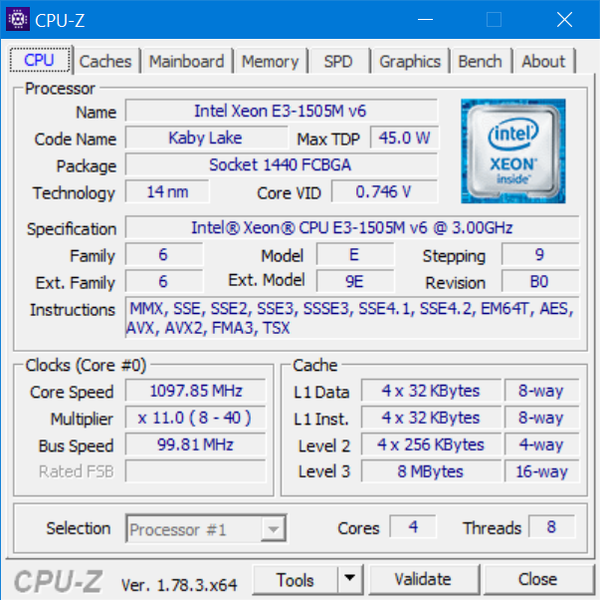

Modern CPUs can have more than a dozen such features, allowing PCs to use, where available, highly optimized code paths to quickly perform certain CPU-bound calculations. The supported extensions can be revealed by a number of free utilities, perhaps the most famous (and best) of which is CPU-Z.

While some of the original extensions such as MMX, SSE1, SSE2, and even SSE3 have become rather mainstream, the remaining extensions are normally used in software as part of an optimized code path: the software in question tests for hardware supporting advanced extensions such as SSE4.1 or AVX, and then either loads a module compiled with optimizations for those extensions, if the PC supports them, or falls back to the slow, compatible method of performing the computation.
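To make that test-then-dispatch pattern concrete, here is a minimal sketch in Python. It assumes a Linux machine, where the kernel lists the supported extensions on the `flags` line of `/proc/cpuinfo` (`sha_ni`, `avx2`, and `ssse3` are the kernel’s names for those features). The function names and the code path labels are made up for illustration; real libraries usually query the CPU directly via the `CPUID` instruction rather than parsing text.

```python
def parse_cpu_flags(cpuinfo_text):
    """Return the set of feature flags from /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()  # no flags line found (e.g. not x86 Linux)


def pick_code_path(flags):
    """Pick the most specialized (hypothetical) code path the CPU supports."""
    # Ordered from most to least specialized; the labels are illustrative only.
    candidates = (("sha_ni", "sha-ni"), ("avx2", "avx2"), ("ssse3", "ssse3"))
    for flag, path in candidates:
        if flag in flags:
            return path
    return "generic"  # the slow, compatible fallback


def read_host_flags(path="/proc/cpuinfo"):
    """Read the host's flags, or an empty set if the file is unavailable."""
    try:
        with open(path) as f:
            return parse_cpu_flags(f.read())
    except OSError:
        return set()


if __name__ == "__main__":
    print("selected code path:", pick_code_path(read_host_flags()))
```

The ordering of the candidates is the design choice that matters here: the check runs once at startup, and everything after it simply calls whichever implementation was selected.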

One of the most important[1] such recent extensions is the AES extension, which introduced a number of instructions that use specialized hardware to perform operations pertaining to AES encryption. These let PCs and servers do things like establish encrypted connections to websites and decrypt protected content an order of magnitude faster,[2] which is no small feat.

Back to the matter at hand: Intel’s SHA Extensions. The Intel SHA Extensions for x86 were first announced in 2013 and debuted in hardware with the Goldmont architecture for “budget-friendly” (aka mediocre performance) Pentium J and Celeron processors as a means to quickly calculate SHA-1 and SHA-256 rounds. SHA-1 and SHA-256 are, of course, hashing algorithms that can be used to create a cryptographically secure,[3] one-way mapping between a variably-sized chunk of data and a 160- or 256-bit digest. Secure hashing is considered a very important cryptographic primitive, and forms an important part of just about any secure, validated transaction that PC code performs.
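If the “variably-sized input, fixed-size digest” idea is new to you, the following short sketch shows it using Python’s standard `hashlib` module. The helper name `digest_bits` is just something made up for this illustration.

```python
import hashlib


def digest_bits(algorithm, data):
    """Hash `data` with the named algorithm and return the digest size in bits."""
    return len(hashlib.new(algorithm, data).digest()) * 8


if __name__ == "__main__":
    short = b"abc"
    large = b"x" * 1_000_000  # roughly a megabyte of input
    for algorithm in ("sha1", "sha256"):
        for data in (short, large):
            bits = digest_bits(algorithm, data)
            print(f"{algorithm}: {len(data)} bytes in, {bits} bits out")
```

No matter how much data goes in, SHA-1 always produces 160 bits and SHA-256 always produces 256 bits.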

Like most other cryptography-related code, cryptographic hashing is CPU-intensive by nature, even with ever more performant cryptographic hashes such as BLAKE2 and SHA-3 being developed, and it is exactly the type of high-impact operation that would benefit greatly from hardware acceleration via extensions to the x86 instruction set.

At the time of their initial release, the Intel SHA Extensions were seen as the logical next step after the 2008 AES-NI instruction set, and developers expected them to be rolled out quickly to Intel’s desktop and mobile offerings in a similar vein. As of the time of this posting, however, the SHA extensions remain restricted to a very particular subset of Intel’s offerings, one that occupies the very lowest ranks of the performance ladder.

While the 4 years since Intel first introduced its SHA Extensions could easily lead one to question Intel’s commitment to this instruction set, the performance benefits of hardware-assisted SHA calculation were apparently enough for AMD to adopt the SHA extensions in its recently released Ryzen line of CPUs. The question now is whether this could be what Intel has been waiting for to finally roll out SHA extensions across the remainder of its product line.

Despite the extremely limited availability of SHA extension support in modern desktop and mobile processors, crypto libraries have already upstreamed support to great effect. Botan’s SHA extension patches show a significant 3x to 5x performance boost when taking advantage of the hardware extensions, and the Linux kernel itself has shipped with hardware SHA support since version 4.4, bringing a very respectable 3.6x performance upgrade over the already hardware-assisted SSSE3-enabled code.
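If you’d like a rough feel for hashing throughput on your own machine, a sketch along these lines will do. It is not the benchmark behind the Botan or kernel figures above, and the function name and default sizes are arbitrary choices for illustration. Whether Python’s `hashlib` ends up on a hardware-accelerated path depends on how the underlying crypto library was built and on what your CPU supports, so treat the number as a ballpark figure only.

```python
import hashlib
import time


def sha256_throughput(total_bytes=64 * 1024 * 1024, chunk_size=64 * 1024):
    """Hash `total_bytes` of zero bytes in chunks and return throughput in MB/s."""
    chunk = bytes(chunk_size)  # a reusable buffer of zeros
    hasher = hashlib.sha256()
    start = time.perf_counter()
    for _ in range(total_bytes // chunk_size):
        hasher.update(chunk)
    hasher.digest()  # finalize so the padding block is included in the timing
    elapsed = time.perf_counter() - start
    return (total_bytes / 1e6) / elapsed


if __name__ == "__main__":
    print(f"SHA-256: {sha256_throughput():.0f} MB/s")
```

Running the same script on a machine with and without SHA extension support (and a crypto library that uses it) is the simplest way to see the kind of gap the numbers above describe.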

With competition heating up in specialized hardware for task-specific computing in the mobile and server markets, ARM’s performance finally catching up to a certain subset of Intel’s offerings, and some stepped-up pressure from AMD at last, this might be what it takes to get Intel to bite the bullet and ship its SHA Extensions in a future (dare we say, next?) microarchitecture.

The internet held its breath (to great disappointment) anticipating SHA support in Intel’s 2015 Skylake line of CPUs, and hints were dropped early last year of possible SHA support in Intel’s Cannonlake microarchitecture. But as we all know, Cannonlake was hit by significant delays that culminated in the end of Intel’s traditional tick-tock product cycle, so it’s anyone’s guess as to when features originally slated for a late 2016 release in Cannonlake, but not found in Intel’s 2017 Kaby Lake offerings, will finally make their appearance.

Today, as far as Intel is concerned, SHA extensions are only available on the Pentium J4205 and N4200 and the Celeron J3455, J3355, N3450, and N3350 CPUs, but users who benefit from hardware-accelerated cryptography have more options than ever before. AMD has Intel squarely in its sights with the Ryzen release: all currently available consumer Ryzen CPUs have native hardware SHA support, and its highly anticipated Epyc (codename Naples) server CPUs, due for release in Q2 2017, are sure to win it favor in the high-performance, high-throughput, encrypted endpoint game. As far as niche use is concerned, ARM’s cryptography extensions include SHA-1/SHA-2 primitives alongside the hardware-optimized AES instructions and are readily available from numerous manufacturers around the globe for specific-use servers and mobile platforms.

The ball’s in Intel’s court now. AMD and ARM seem, for once, to have turned the tables when it comes to supporting the latest and greatest hardware extensions, having traditionally been one or two release cycles behind the market leader in adding support for the likes of SSE and AVX. But perhaps Intel has something up its sleeve, and we’ll be seeing a revised SHA instruction set with support for newer, unweakened/unbroken cryptographic hashes such as SHA-512 (and, by extension, SHA-512/256 and SHA-512/384), which currently can only be partially accelerated in hardware with the AVX2 extensions. Only time will tell.

1. And most contentious, with rumors and conspiracy theories regarding backdoors abounding.

2. On this user’s machine, `openssl speed aes-128-cbc` is approximately 14.5x slower across the board than `openssl speed -evp aes-128-cbc`, which you can test for yourself if your CPU supports the AES instruction set and you have a recent enough version of openssl with AES-NI support available.

3. Fast forward 4 years from the 2013 release date and SHA-1 is no longer considered secure, while SHA-256’s security has been called into question/weakened enough to recommend using SHA-512 truncated to 256 or 384 bits instead.

Hey, just a comment on the beginning, one thing that troubled me are the different names of x86. I think it would be helpful to mention them (IA-32 is one of them, and is used by golang instead of x86)