One of the biggest, bestest, and most-hyped features of Windows Vista (according to Microsoft, that is) was the brand spanking new TCP/IP networking stack. If you ask us, it sucks. Network performance hasn’t improved over the ancient stack used in XP (nor should it; it’s not like there’s anything new in IPv4), though it does add better IPv6 support out of the box and ships with even more functionality in Windows 7. But more importantly, Microsoft threw out decades of testing and quality assurance work on the existing networking stack and replaced it with something rather questionable.

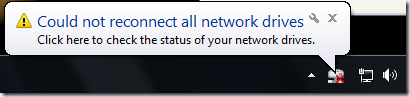

We’ll follow up on the technical side of this topic in a later article, but for now, here’s an example that most of you are sure to have come across if you’ve ever mapped network drives:

This popup is shown at system startup if you have any network drives mapped to UNC shares which are not protected with a username and password. If you map a network destination that does require authentication, Windows will map the drive OK. To further complicate matters: this message is shown only when you start up from a cold boot! If you restart your PC (vs. shut down and power up), it won’t appear.

Resolving the issue is straightforward enough: just double-click on the network drive in My Computer and it’ll automatically, instantly, and silently connect. Which makes one wonder why Windows couldn’t connect in the first place.

Good question.

While working on an update to Genie Timeline, I ran across this issue. Windows wouldn’t connect a mapped network destination at startup for some of our customers, meaning that our backup couldn’t continue (assuming you’re backing up to the network drive) until you manually intervened and opened the mapped drive yourself. Definitely not cool.

As an in-house R&D test, we attempted to manually re-establish the connection via the command line. By running

net use Z: \\remote\path\

we were able to re-establish the “disconnected” network drive. But when we tried to implement this in code, we came across a funny issue. If you try to run this very early on during the logon procedure, it will fail with error code ERROR_FILE_NOT_FOUND – basically, it’s unable to contact the network path. The funny thing is, explicitly testing to see if we can connect to the network path [GetFileAttributes(networkPath)] doesn’t return any error. But Windows itself is unable to establish a connection. Using ‘net’ from the command line was just a workaround for R&D purposes, so we turned to the trusty old WNetAddConnection2 function – and it too failed with ERROR_FILE_NOT_FOUND, even though the network path definitely existed and was perfectly accessible as a UNC location!

Attempting either of these techniques to establish a mapped network drive connection later on – say 2 or 3 minutes after logon – works just fine. As does attempting to establish a connection to a UNC path that requires authentication. Or attempting to connect to the network drive after a restart and not a cold boot.

In the end, we resorted to calling WNetAddConnection2 at timed intervals after startup whenever the UNC path is accessible and the mapped network drive is not. It got the job done, but it really does speak volumes when developers have to jump through hoops to address issues that have been around for 2 OS releases and 5 years. We have no such problems with Windows XP.
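The timed-retry workaround described above can be sketched roughly as follows. This is a minimal Python illustration, not the Genie Timeline code: the function name `remap_when_reachable`, its parameters, and the three callbacks are all hypothetical. In a real Windows program the callbacks would presumably wrap something like GetFileAttributes on the UNC path and WNetAddConnection2 for the mapping itself.

```python
import time
from typing import Callable


def remap_when_reachable(
    unc_reachable: Callable[[], bool],    # hypothetical: can we see the UNC path?
    drive_connected: Callable[[], bool],  # hypothetical: is the mapped drive usable?
    connect_drive: Callable[[], bool],    # hypothetical: try to map; True on success
    attempts: int = 10,
    interval_seconds: float = 15.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Retry the mapping at timed intervals until it works or attempts run out."""
    for attempt in range(attempts):
        if drive_connected():
            return True
        # Only try to map when the share itself is visible but the drive is not.
        if unc_reachable() and connect_drive():
            return True
        if attempt < attempts - 1:
            sleep(interval_seconds)
    return drive_connected()
```

Passing the checks in as callbacks keeps the retry logic separate from the Windows-specific calls, so the loop can be exercised without a network.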

There is an easy fix. You have to understand how win 7 does a logon to a remote domain.

The domain name must be entered first. The default entry won’t work for remote network drives.

You have to enter things in this format:

username: RemoteDomain\username (for me it’s “\\RAIDON\admin” to connect to my NAS NOT “admin” alone)

password: password

“RemoteDomain” will show up listed under “Domain:” and if you check the remember box it will not forget them during a cold start.

BUT

IF you do not enter the remote domain, the default settings are:

username LocalMachine\username (which just looks at the win 7 machine, not the network.)

password: password

and no matter what it will forget them on the next logon as it tries to connect to localhost NOT the network drive or share.

NET USE works, but if you change a drive letter later you are in trouble, as its mappings are persistent.

Hi Ken,

Thanks for your reply. Actually, if you’ll refer to the article (the part in bold), you’ll see that this doesn’t apply in our case.

Windows doesn’t have a problem if we’re authenticating to the remote device with a username/password combination. It’s only when no authentication is required (i.e. I never even have to enter a username, and therefore your technique of always prepending the domain to the username – which is certainly always good advice – will not work) that Windows will crap out.

I map the drive manually. This is the most bizarre fix if you’re on a domain and have similar issues for domain network drives. It helps for the user to have admin rights so you can run things in elevated admin form.

i map the drive manually, …x: \\home\c$ /p:y

-in an elevated prompt. in windows 7, though, i found advice on a forum to add the mapping in a normal command prompt and then add it again in an elevated command prompt. not sure if this step was necessary. create a bat file as a backup.

however this left me with an issue… it kept forgetting my cached domain password each time i rebooted that matched my windows 7 login /ad account. that is why the token fix is needed. i do not have my notes handy.

general idea:

http://eightwone.wordpress.com/2010/03/22/kerberos-max-token-size/

windows xp equivalent

[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Lsa\Kerberos\Parameters]

"MaxPacketSize"=dword:00000001

"MaxTokenSize"=dword:0000ffff

xp equivalent:

net use x: \\client\c$ /p:y

windows 7?

net use [driveletter]: /delete

net use [driveletter]: \\server\share /user:[username] password /persistent:yes

To tackle this issue, use the MapDrive utility from here (zornsoftware.talsit.info/?p=129)

Hi tuxplorer,

Thanks for that link. MapDrive does exactly what I’m now doing in my code – but it makes the features user-accessible. I’ll definitely be using it for my own, individual needs. But the similar workaround that I’m using in GTL is working fine and dandy now. Thanks 🙂

Hopefully someone is still reading this thread…

Has anyone run into a problem where a mapped network drive connects fine and works for a while, and then, sometime later, you go to open the drive, either through My Computer or from a shortcut, and it just hangs? This locks up Explorer and the only cure is to reboot the computer. Unfortunately, in my case, when the computer goes to shut down, it hangs on “Logging Off” and will never shut down. I have to hold the power button and force it. I have not found any correlation to software I use, but I suppose that’s possible.

Here are the specs:

Gateway PC with AMD Phenom II 2.6 GHz

8.00 GB ram

Windows 7 64-bit

I run Adobe Flash and Mozilla Firefox mostly, with some other smaller programs mixed in. As I said, I don’t know if software has anything to do with it, but I can’t find any mention of this particular problem online, so hopefully one of you has heard of something.

Thanks,

Brian

The “could not connect” error you’re getting on startup is a timing issue… It’s trying to re-connect a mapped drive before it’s connected to the network itself. Once it does connect to the network, your drive works fine.

I ran into this with a piece of software I’ve been working on. It will launch all the startup programs *before* it connects to the network. XP doesn’t do this. So I had to add a test in my software to ensure the network was live (IsNetworkAlive) before I started trying to connect to stuff. Once I did that, everything was fine.
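A rough sketch of that kind of startup gate, in Python. The names `is_network_alive` and `wait_for_network`, the timeout, and the poll interval are illustrative assumptions, not the commenter’s code. The first function calls the Win32 IsNetworkAlive function from sensapi.dll through ctypes and only works on Windows; the second just polls whatever check it is given.

```python
import sys
import time
from typing import Callable


def is_network_alive() -> bool:
    """Ask Windows whether any network connection is up (Windows only)."""
    if sys.platform != "win32":
        raise OSError("IsNetworkAlive is only available on Windows")
    import ctypes
    flags = ctypes.c_ulong(0)  # receives the NETWORK_ALIVE_* flags
    return bool(ctypes.windll.sensapi.IsNetworkAlive(ctypes.byref(flags)))


def wait_for_network(
    check: Callable[[], bool] = is_network_alive,
    timeout_seconds: float = 120.0,   # assumed value; tune for your network
    poll_seconds: float = 2.0,        # assumed value
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll until the network check passes; give up once the timeout elapses."""
    deadline = clock() + timeout_seconds
    while True:
        if check():
            return True
        if clock() >= deadline:
            return False
        sleep(poll_seconds)
```

A startup program would call `wait_for_network()` once before touching any share and carry on only if it returns True.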

But why map network drives? Really… just create a folder in your user area and put all your share-level shortcuts in it. Most programs newer than Win3.1 will recognize UNC pathnames to their files…

commontater, that does not explain why it only affects *password-protected* network shares. Put two different shares, and only one of them is protected against anonymous access, and you’ll see what this post is complaining about.

While “most programs newer than Win3.1” will recognize UNC paths, Windows itself won’t. cmd.exe does not understand network shares.

Odd… I just opened a command prompt in Win7 (professional) and did

and it worked just fine. (AMPS is an HTPC, on my lan)

OOPS again… it seems this blog won’t accept the double backslash… sorry for the multiple postings. You can take out these last two if you like.

[ MQ: No problem, fixed it for you 🙂 ]

Can you do

?

No the CD didn’t work… but I didn’t expect it to.

Copy works. Del works.

I also do my backups using batch files with UNC paths and XCopy… this one actually works under XP as well.

It may just be that it’s trying to connect to the protected share before the network is up and running… At least that was the problem in my case. Oddly it worked on the machines I have on fixed IPs but not on the ones using DHCP (which takes longer).

http://msdn.microsoft.com/en-us/library/aa377522(VS.85).aspx

http://msdn.microsoft.com/en-us/library/aa376851(v=VS.85).aspx

These might help you…

You CAN do

pushd \\amps\movies\

Just solved it. In our case, scripting connection refreshes does not work, as we use folder redirection for roaming users. The problem is that practically everywhere we use DFS with the old style UNC with the bare domain name

eg \\ourdomain\shares\apps or \\ourdomain\shares\users

Windows 7 takes about 5 minutes after startup to realize that it can use the bare domain name. Before this it only understands ourdomain.local.

2 ways to fix it:

– In the TCP/IPv4 settings, under Advanced > DNS, put local and ourdomain.local into the DNS suffix search list.

– Do the same using Group Policy: Computer Configuration > Administrative Templates > Network > DNS Client > DNS Suffix Search List.
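To see why adding those suffixes helps, here is a deliberately simplified Python model of how a suffix search list turns a bare name into candidate fully qualified names. The function name `candidate_names` is hypothetical, and the real Windows resolver applies more rules than this (devolution, connection-specific suffixes, and so on), so treat it as an illustration only.

```python
from typing import List, Sequence


def candidate_names(host: str, search_suffixes: Sequence[str]) -> List[str]:
    """Return the names a resolver might try, in order (simplified model)."""
    if "." in host:
        # Assumption: a name that already contains a dot is tried as-is.
        return [host]
    # A single-label name gets each suffix appended in list order.
    return [host + "." + suffix for suffix in search_suffixes]
```

With the two suffixes from the fix above, `candidate_names("ourdomain", ["local", "ourdomain.local"])` yields `ourdomain.local` first, which is the name the machine already understands right after startup.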

Have a nice day!

So what do you do if you are on a workgroup?

Having same issues — but thought I’d pipe in on the DOS or Command Prompt commands. (And reveal my age in the process). Back in the old days when a “mouse” was a cat’s toy, a “registry” was something you sign at a funeral, and a re”GUI” was the last name of an exceptionally unfortunate inchworm in a children’s book, we concerned ourselves with “DOS commands.”

One thing to keep in mind is that in the old days, (pre-NT), the command prompt was the base layer of human interface with the machine. All applications, etc., ran “on top” of the command prompt “layer.” All applications had to answer ultimately to that “layer.” Not so today. Today, the “command prompt” is just another application providing an interface to the system. (That’s one reason it’s no longer called a DOS prompt). It is important to keep this distinction in mind if you’re still relying on environment variables, path entries, batch files, parameter redirects, etc.

There are “internal” and “external” commands available from a command prompt. Although the “original” PC-DOS/MS-DOS was not necessarily implemented in “layers,” it helps to think about it that way. So “underneath” the command prompt interface is the systems operating software that implements the interface. Today that is the executable cmd.exe. That executable implementing the command prompt interface contains a set of commands that are available to every single instance of the command prompt window (or the DOS prompt, the pre-“Windows” user interface). “cd” above is a perfect example. “Change Directory.” When you enter “cd” at the prompt, followed by a set of attributes, it is cmd.exe that carries out that command. It is a “built-in” or “internal” command that is available within the same instance that implements the command prompt.

You will recall that in the old days, this was how one launched an application. For example, to launch the Word Perfect 5.1 application, from the DOS prompt, you would enter “wp” or “wp.exe”. The “internal” executable implementing the DOS prompt contained a routine that said, “If the user’s command matches one of the built-in commands, then call that routine and execute it. Otherwise, go out and look through the files on this directory on this disk drive, and if there is an executable file that matches the name that the user entered, then execute the stack of instructions in that executable file.”
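The lookup routine described above can be sketched in a few lines of Python. This is an illustration of the idea only, not how command.com or cmd.exe is actually written; the names `dispatch`, `find_external`, and `EXECUTABLE_EXTENSIONS` are made up for the example.

```python
import os
from typing import Collection, Optional, Sequence, Tuple

# Extensions tried, in order, when the user does not type one (assumed order).
EXECUTABLE_EXTENSIONS = (".com", ".exe", ".bat")


def find_external(name: str, search_dirs: Sequence[str]) -> Optional[str]:
    """Look for an executable file matching the name in each directory."""
    has_extension = os.path.splitext(name)[1] != ""
    candidates = [name] if has_extension else [name + ext for ext in EXECUTABLE_EXTENSIONS]
    for directory in search_dirs:
        for candidate in candidates:
            path = os.path.join(directory, candidate)
            if os.path.isfile(path):
                return path
    return None


def dispatch(command_line: str, internal_commands: Collection[str],
             search_dirs: Sequence[str]) -> Tuple[str, Optional[str]]:
    """Classify a typed command as internal, external, or unknown."""
    words = command_line.split()
    if not words:
        return ("empty", None)
    name = words[0].lower()
    if name in internal_commands:
        # Built-in: the shell runs its own routine, no file lookup needed.
        return ("internal", name)
    path = find_external(name, search_dirs)
    if path is not None:
        # Not built-in: a matching executable was found on disk.
        return ("external", path)
    return ("unknown", name)
```

Given a directory containing wp.exe, `dispatch("cd \\docs", {"cd", "dir"}, [that_dir])` is classified as internal, while `dispatch("wp", {"cd", "dir"}, [that_dir])` resolves to the file on disk.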

So even though you can enter the command “wp” or “wp.exe” from the DOS prompt, (or “Command Prompt”), “wp” is not a DOS Command. Sure, the “command” will cause the computer to launch the application, but it is not one of those commands that are internal to the operating system, contained in cmd.exe. (Or the other system files such as sys.exe, for those of you who are as old as I yet still remember enough to know that the Word Perfect 5.1 executable was actually wp51.exe, not wp.exe).

Just like wp.exe is an application, such that if you were to enter “wp.exe” from the command prompt, you would execute the Word Perfect application, there were also “utility” applications that came along with the command prompt executables as part of the MS-DOS/PC-DOS install. One such application is xcopy.exe. We have all used the command xcopy to copy directories of files, or directory structures, or to copy and replace only those files that have a later date than the matching ones in the target directory, or to recurse down the directory tree, etc., etc. Only that’s really a kind of lie. No one has ever actually used the xcopy command, because xcopy IS NOT A COMMAND. Xcopy IS AN APPLICATION. The actual command that we use is the command to call the xcopy.exe instruction stack, pass any parameters to it, then turn over control and execute the instruction. To the computer — to the CPU and to the command prompt — xcopy.exe is no different from wp.exe. It’s an application, either of which is invoked by entering the name of its executable from the command prompt.

So, what??? To the user, as long as “xcopy” runs and works, and as long as “cd” runs and works, then why should the user care whether the instruction stack is contained in the same executable that implements the command prompt, or whether the instruction stack is located in another executable file, (or dynamic link library, “dll”)? Ultimately the instruction stack will be fed to the CPU and will be executed all the same, right?

Wrong. (Well, not entirely wrong, but not exactly right, either). True, the instructions are going to be fed to the CPU, and the CPU does not give a flying flip whether the instructions were previously interpreted or compiled and queued from an executable on the drive, or whether they were read from one file on a disk or were already “resident” in volatile memory. THERE IS A DIFFERENCE, HOWEVER, in what OTHER FEATURES OF THE OPERATING SYSTEM OR THE ENVIRONMENT ARE AVAILABLE to the instruction stack.

Recall the discussion of layers of the operating system — we can think of them as “kernels” even though MS-DOS/PC-DOS does not perfectly adhere to the kernel model. Cmd.exe is going to implement the command prompt. ALTHOUGH TODAY cmd.exe is just a “shell,” or on the same level as any other application, IN THE OLD DAYS, THAT WAS NOT SO. In the old days, the computer HAD to load and execute the operating system and the command prompt (DOS prompt) BEFORE IT WAS ABLE TO LAUNCH ANOTHER APPLICATION. Indeed, the only way to launch an application was to invoke the stack of executable instructions by entering the name of the file containing those instructions from the command prompt. (For the parsimonious purists, yes there were other ways, but those are not part of the “end-user” computing environment or interface).

Also in the old days, (the early 1980s), microcomputers (if that word is still in use) or “personal computers” were mostly intended to be used as stand-alone computers. They were not devices to “network” together. They did not ship with an Ethernet NIC, and even telephone MoDems (or “couplers” if you will admit to being so ancient to recall such devices) were external boxes that connected to the personal computer through either a 9-pin or DB-25 serial connector. (Which were as overpriced in retail then as their counterparts are today).

So when “local networking” came out, it was virtually all ala Novell. The network was installed as an application, residing ABOVE the operating system. Novell Netware was a set of executables/dll’s, etc., that you invoked AFTER you booted and the command prompt was available. Only THEN could you “see” other computers, other network devices.

So therein is the big difference — albeit an historical one today — between “internal” and “external” command prompt commands. Because the “internal” commands — for the purpose of explanation, those commands that were inside cmd.exe — had to be up and running before you could even establish network connections, those internal commands did not have any features to access the network. The thought of doing so would be equivalent to saying, “I want to build in some programming code in the ‘change directory’ or ‘cd’ command, so that it can access Word Perfect 5.1 or Lotus 1-2-3.” Well, EVEN IF every computer did have those two applications — and they did not — there would be no way for the internal DOS command to “interface” those applications, because at the time the DOS commands were available to the end user, those applications were not being executed. (Of course, if you had a way to pre-load the directory where those executables were located, then the DOS commands could interact with them by automatically finding the files and executing them, THEN interacting with them — and THUS began the curse (or the blessing) of environment variables, cfg and ini files, dlls, and eventually the registry).

So what does this all mean, and why did I post it here? UNC is fundamentally a networking convention, which was not historically a component of systems software kernels up through and including the command prompt. So even though Microsoft COULD rewrite cmd.exe so that the commands will access other components of the operating system to resolve UNC pointers, it just simply has not done so. AN EXTERNAL COMMAND, on the other hand, such as xcopy.exe (which can be invoked with just “xcopy” without the exe extension), is an application “external” to cmd.exe. Under the old architecture, then, xcopy.exe can have access to any network application that might be “running” or “memory resident,” because it is only going to be executed with reference to a network AFTER the network application is “started”; whereas the cd command that is “internal” (part of command.com in the DOS days) has to be up and running before there is even the opportunity to start the networking application, or to “load” it in memory so that it is “memory resident,” akin to “services” today.

So for these historical reasons, “internal” commands will not be able to resolve UNC pointers, but “external” commands will. I would wonder whether “DIR” has become an external command. Xcopy is merely an application like any other, and it is only “bundled with” and “considered” part of the command prompt because it is an application that accomplishes things similar to the types of things you would manage with the internal commands. In other words, it is categorized with the command prompt internal commands because of its function, not because of the mechanics of how, when, or from where it is executed. (Note that I have also used “exe” as a synonym with “com” and other types of “executables,” even though there is a difference that is not relevant for the purpose of this discussion, lest we were to go deeper).

Also note that for historical reasons — that also explain why “command prompt” controlled what was referred to as the “Disk Operating System” — all the commands were executed “from” a pointer to a particular disk volume and directory on that volume. That is how we got the convention of drive letters. Windows — and other operating systems for that matter — could very easily dispose of the concept of drive letters. From a DOS prompt, they gave users the false impression that there is some identity between the processor and the physical disk drive or logical volume/directory. When we began remote processing from a LAN of computers, the concept that I had to explain, over and over, and the vast majority of “power users” still never understood, is the distinction between a process and a “computer,” where “computer” in their minds was their physical disk drives that “ran” their programs. It emotionally disturbed some of them to learn that changing disk drives and directories at their DOS prompt was not changing “where they are,” or where a command or application would be “run from,” but they were merely setting and resetting a pointer to the “default” path for the computer to use if they do not fully specify one in their commands, and that the actual “program” or “command” or “application” would be run from the same computer, or “CPU,” regardless of the path that is showing at their command prompt, (assuming the existence of the line ubiquitous in autoexec.bat files: PROMPT $P$G> ). The convention obstructs most users from thinking in two dimensions — location of data on a volume (where “data” includes input stacks), versus the location of an active process. 
I am not sure many of them really understood why their “personal drive” is Y: when they run the program locally, but when they “remote submit” the program to the computer named “Bernie” in the basement, they have to call their same “personal drive” on “their” computer (sic- it was a mapped network share) something like “\\LocalFileServ\EE\secured\%subUsrDept%\%subUserNm%\”. And that is not even to get to the confusion and havoc from the concept that “Bernie” is a computer, just like any other computer, and by calling it a “server” we do not mean that it is somehow different than any other computer, but we are only describing the purpose to which that particular computer is devoted in our network.

It would be more accurate to have a prompt that reported the COMPUTERNAME

Every time I try to execute an installer over my local network, from a Win 7 machine, it locks up the Win 7 machine with the only solution to do a hard reset. I have tried to condition myself to avoid it, but every now and then (like just now) I forget and the result is a frozen Win 7 box. This happens on MULTIPLE Win 7 clients, but does not happen for XP clients. M$ seems to have screwed the pooch somewhere.

The network paths I am using are not mapped, just UNC, and the host is a Win 7 x64 machine. I can’t help but think that there is some serious problem when a Win 7 client machine is guaranteed to lock up (won’t even respond to CTRL-ALT-DEL) just by executing an installer from a UNC path. This has to be one of the most annoying “features” of Win 7, period.

So far I have not been able to find a solution to this. 🙁

Keith, you have to wait a very long time – it’ll “un-lock up” in the end. The reason for this is that Windows calculates the SHA hash of the file to determine whether or not it’s signed before running it. For large files, this can take several minutes.

Hi Mahmoud,

I’ll have to be more patient and see if it does un-lock next time. However, last night I had this lock up more than 3 times (since I was testing ways to try to resolve the issue), and the file I was trying to execute was only a 2 MB setup.exe. Over a 1000 Mbps network connection, surely it cannot take more than a couple of seconds?

Regards,

Keith.

To Mr. Scott Rodgers,

I read your post and came to the conclusion that we are age mates but that you know significantly more about computers than I do. However, based upon what little I do know, your post was one of the best (if not the best), explanations of the transition from DOS to Windows (and the significance of it) that I’ve ever seen. Thanks and kudos to you, sir.

To all,

I’m having the “Could not connect all network drives” problem ICW a DNS323 NAS. Earlier this afternoon, I managed to get the NAS remapped using the “discovery while fumbling” technique. I disconnected the Ethernet feed to my Linksys E3000 with the intent of shutting down Zone Alarm. In the heat of the battle, I forgot to turn off ZA but I did attempt to remap the NAS (from Easy Search) and it worked like a charm. I reconnected the Ethernet cable, checked the “Computer” window and the NAS was still there. Because I didn’t actually fix anything, how long it will remain detectable is anyone’s guess. The chances are good that the next cold boot will send the DNS323 back into Never Never Land.

The only reason I mention the incident is in the hope that it might provide a clue, for someone more knowledgeable than myself, as to the root cause of this trouble.

Hi all, it’s interesting to see how many of you are participating in this forum. I am dealing with similar issues on our network (a mix of Win7 and XP), where users can’t access network drives in Explorer, but using the UNC path without a domain (e.g. \\share\dfs\folder) works. However, the default mapping containing ‘.local’ does not work (sometimes). Hope to get the golden tip soon. With regards, Filip

Filip, you should probably check your DNS settings and especially the default lookup domain configuration. If possible, use the AD server as the DNS machine as well, I have found that it eliminates the need to configure certain tricky minutiae.

I have had success using the server’s IP address instead of its name, together with the /savecred argument, e.g.:

net use x: \\ip of server\share_name /savecred

note the space after the drive letter’s colon.

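Create this .bat file with your own drive letters and share names and place it in the Startup folder.

@echo off

rem Wait about 20 seconds so the network has time to come up

set delay=20

ping localhost -n %delay% >nul

rem Re-create the mappings (use straight quotes; curly quotes break cmd)

net use X: "\\NAS310\X"

net use Z: "\\NAS310\Z"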